XR World Weekly 001

Highlights

- Godot 4.0 Released!

- Vid2Avatar: A Method to Generate High-Quality Virtual Human Models from Video

- Sushi Ben: A VR Game That Organically Combines Comic Paneling and VR

Big News

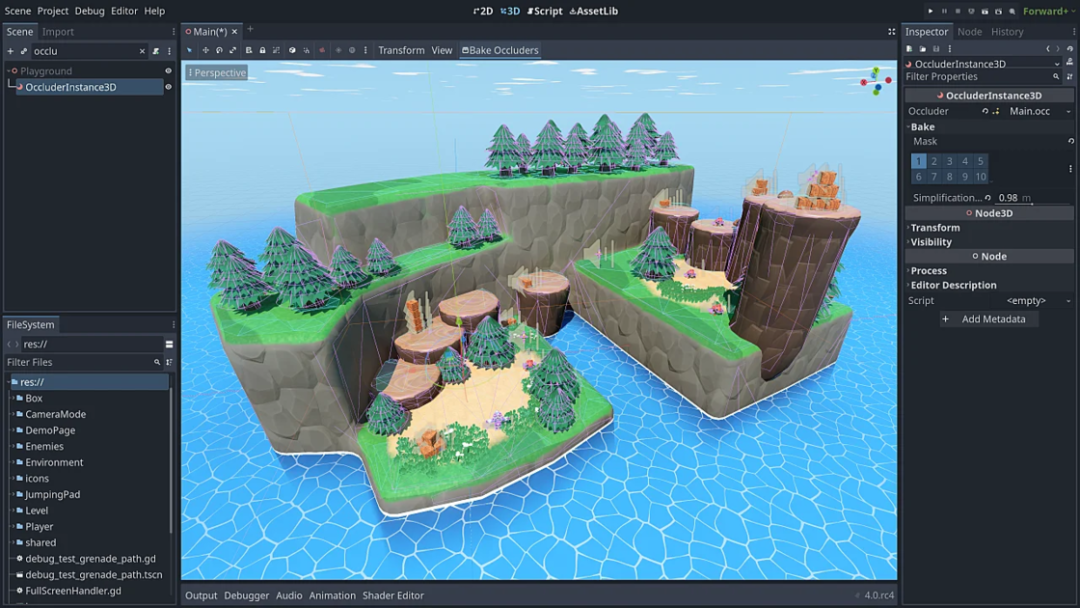

Godot 4.0 Released!

Keywords: Game Engine, WebXR, OpenXR

Godot is a feature-rich, cross-platform game engine for creating 2D and 3D games. Although it is not as popular as Unity or Unreal, its completely free model, gentle learning curve, and fully open-source nature attract many loyal fans. (Godot is also said to be especially well regarded for 2D game development.)

On March 1, 2023, the Godot team released Godot 4.0. This long-awaited version brings many major updates, such as a Vulkan-based renderer, the new SDFGI (signed distance field global illumination) technique, a revamped TileMap editor for 2D levels, and an improved shader editor. But what excites me the most is Godot's new set of XR features!

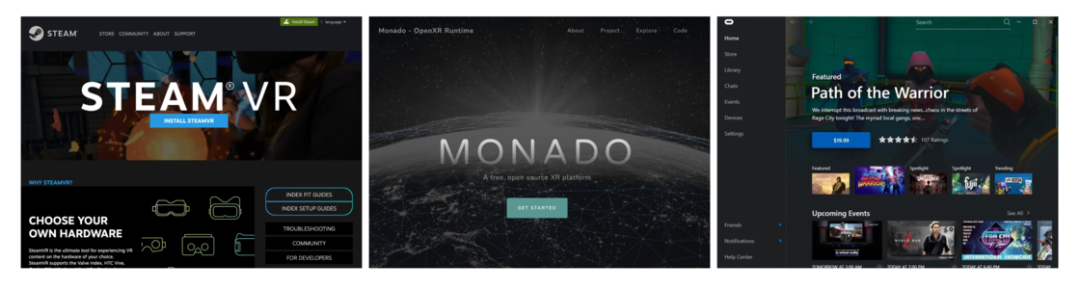

First, OpenXR is now built into the core of the Godot engine, so developers no longer need third-party plugins to build XR projects. Godot supports all major PC headsets, including SteamVR on Windows and Linux, Oculus on Windows, and Monado on Linux.

For developers targeting standalone Android headsets, the Godot team also provides an official plugin, Godot OpenXR Loader, which supports Meta Quest, PICO 4, and other VR devices. Support for Magic Leap 2, OpenXR-compatible HTC headsets, and the new Lynx R1 AR headset is also in the works.

Even better, Godot now also supports WebXR, so developers can build XR games and apps that run in a web browser.

In addition, the Godot team and community developers have jointly developed a toolkit called Godot XR Tools, which provides many ready-made components, such as locomotion through virtual scenes, hands that follow the player's controllers, and easy object pickup. This lets developers quickly prototype XR games in Godot.

Even more encouraging, these tools come with complete documentation and instructions. To lower the barrier to entry further, the Godot team also provides a standard project template. (Very thoughtful; hopefully the Unity team catches up soon.)

The Godot team also highlighted community member teddybear082, who has used Godot XR Tools to build VR ports of several open-source projects, showing how easy and efficient the toolkit is to use. In one tweet, teddybear082 shows off his VR port of the game Cruelty Squad.

After digging into these Godot 4.0 features, I'm eager to try the engine myself. Perhaps in the near future I'll share some first-hand experience with it.

Vid2Avatar: A Method to Generate High-Quality Virtual Human Models from Video

Keywords: 3D digital human body reconstruction, monocular RGB video, Chinese researchers

A team led by Dr. Chen Guo from ETH Zurich (ranked 4th in Engineering and Technology in the 2021 QS World University Rankings) recently published a research paper titled “Vid2Avatar: 3D Avatar Reconstruction from Videos in the Wild via Self-supervised Scene Decomposition”.

Although the paper is dense with specialized terminology and math, its introductory video makes the core idea clear: the method creates a 3D human model from a single monocular RGB video. In more technical terms, it is a high-fidelity 3D human body reconstruction algorithm.

Of course, we won’t go into the details of the paper here, but rather explore the potential value and practical significance of this type of technology.

In fact, 3D digital human reconstruction has long been part of our everyday media. For example, it was used in Furious 7 (2015) to digitally recreate the late actor Paul Walker.

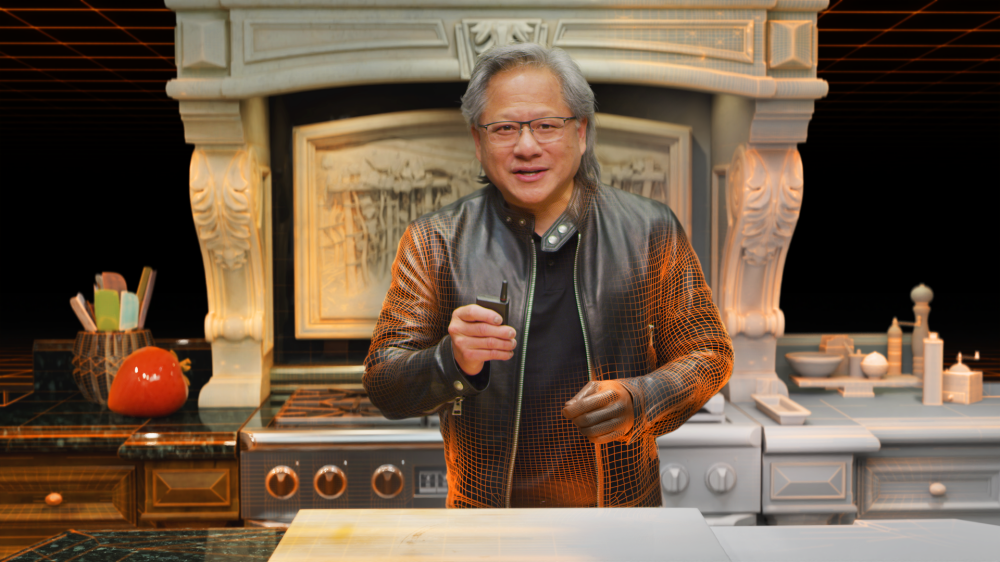

Although this technology has been around for a while, it remains expensive to use. For example, at GTC 2021, Nvidia's keynote featured a hyper-realistic virtual human that drew widespread attention and discussion.

According to Nvidia, making the virtual Jensen Huang (affectionately called "Lao Huang" in Chinese) look convincing in the keynote video took 34 3D artists and 15 software researchers, who produced 21 different versions of the digital Jensen. The one shown to the audience was judged the best of them.

To get there, Nvidia combined a range of modeling, editing, animation, and rendering technologies, and relied on industrial-grade, high-end capture equipment to ensure the geometric and texture accuracy of the reconstructed body. Only after a long production effort did it achieve a result convincing enough to fool viewers.

The high labor and time costs, together with the technical complexity and expertise required, make such methods hard to bring to the consumer market.

Back to Dr. Chen Guo's work: techniques like Vid2Avatar can greatly reduce the cost of creating virtual avatars, because with the spread of smartphones, monocular RGB video is everywhere. If high-quality, animatable digital doubles of ordinary people can be produced efficiently and conveniently from monocular video alone, that would genuinely accelerate the adoption of virtual avatars and related technologies, which is one of the pressing open problems in the XR field.

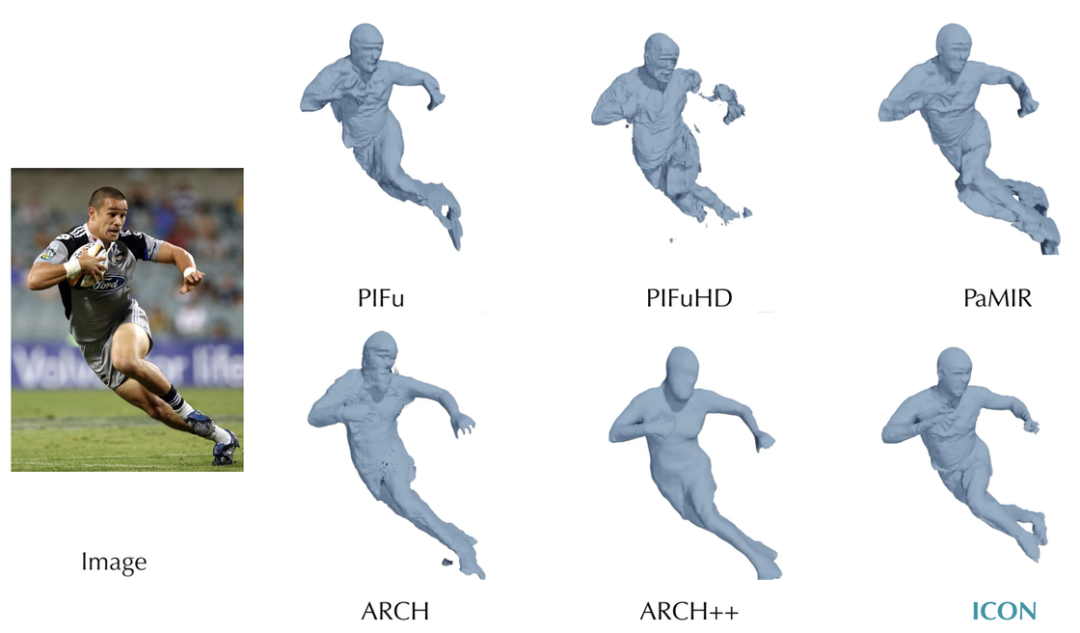

The paper also mentions several video-based 3D human reconstruction algorithms, such as SelfRecon and ICON. Academia has also produced many image-based methods, such as PIFu, ARCH, and PaMIR. These techniques have multiplied rapidly in recent years, which makes the field exciting to watch!

We can also see many top Chinese universities among these research teams, such as Tsinghua University and the University of Science and Technology of China. Even in papers from non-Chinese universities, many of the researchers are Chinese, such as today's protagonist, Dr. Chen Guo.

Although we cannot see the future, we can at least see Chinese researchers actively exploring this field, which makes me genuinely proud and gratified, especially given the unusual times we are living in!

Alright alright, we’d better rein in this feeling of pride!

Finally, let’s look forward to these technologies being applied to our lives as soon as possible! Maybe the next live event from Minority can have a high-fidelity virtual avatar version of Old Mai!

Idea

Sushi Ben: A VR Game That Organically Combines Comic Paneling and VR

Keywords: Anime, comic style, VR game

Some more work in "pongress" of my VR game's ping pong mini game! 🏓🏓#VR #UE4 #indiegame #gamedesign pic.twitter.com/XwweOAqitG

— Dmaw⛅ (@DmawDev) January 2, 2023

The video shows a VR ping pong game. Unlike other ping pong games, it is steeped in anime style, from the characters to the environment, and it is visually striking.

What's truly unique is that during play, comic-style illustrations pop into the view in response to the intensity of the rally and the player's actions. This makes the gameplay a lot of fun, like stepping into a hot-blooded sports anime!

Compared with current VR games on the market, this presentation makes the tension of a match more pronounced and conveys the opponent's emotions more directly (finally, you are not facing an emotionless paddle-swinging machine).

The demo recently went viral on Twitter. It comes from Sushi Ben, a VR game currently in development by Dmaw and built with Unreal Engine. According to Dmaw, the ping pong footage is only a small part of the full game, but the comic style will run through the entire story.

Before this issue went out, Dmaw posted another video on Twitter showing more gameplay, such as fishing and bug catching, accompanied by the same anime illustrations. It looks very promising! Judging from the tweet's hashtags, the game may also come to PSVR 2.

Here's our first gameplay trailer!

Also make sure you watch to the very end to see the reveal of the the games writer.#PSVR2 #VR #UE4 #indiegame pic.twitter.com/Qh9KgYrAZX

— Dmaw⛅ (@DmawDev) February 23, 2023

Of course, beyond the highlights of the game itself, its design style with comic illustrations is also quite eye-opening, and gives me many interesting ideas!

For example, this style would suit adaptations of classic sports franchises very well, such as Slam Dunk, Captain Tsubasa, and Major (yes, this dates me).

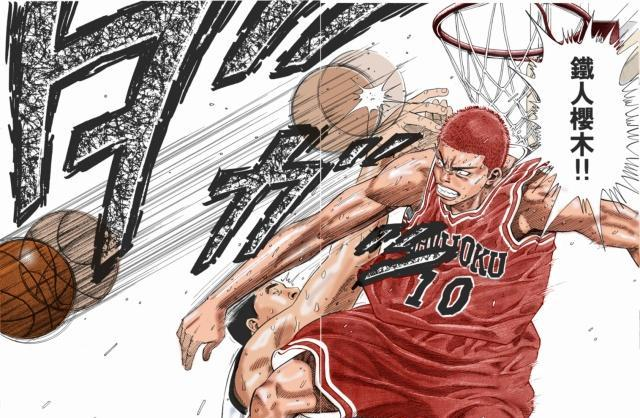

Imagine a Slam Dunk VR game that faithfully recreates classic manga moments through specific scenarios and triggers, such as the scene pictured above of Sakuragi blocking Miki's shot!

Picture yourself playing Sakuragi on defense. As you leap up, Miki is in front of you, and illustrations fill the rest of your view: Haruko's hopeful gaze, Coach Anzai's kind smile, the Sakuragi Army cheering. Add music building to a climax, and wouldn't you feel pumped and want to swat that ball away?

This sense of immersion not only faithfully reproduces the comic scenes, but also lets players relive the characters’ inner emotions. This would be a much more exciting game experience than just playing basketball. Really looking forward to it!

Drink Cans as Game Screens

Keywords: cans, curved display, games

This AR experience that turns a can into a game display is fun.

I bet campaigns like this will really start showing up within the next year or two! pic.twitter.com/y3ZZ4otYq4

— IVAN@AR × Marketing (@van_eng622) January 24, 2023

Although cans show up in many AR apps, they usually serve only as triggers: once the user scans the design on the can, the rest of the experience has nothing to do with the can itself.

But in fact, the can itself can also be the “main arena” of an AR game. The standard shape of cans is especially suitable for hosting a complete AR scene.

For example, in the video above, after the user scans the can, the can itself turns into a game screen, completely covered by a black game background. The whole can acts like a miniature curved display, except that the display is concave rather than convex. The user can then face this small curved screen and play an inventive space shooter.
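To make the "concave curved display" idea concrete, here is a minimal Python sketch of how an AR app might wrap a flat game frame onto the inside of a can. It assumes the can is an ideal cylinder with its axis along +y and the viewer on the +z axis; the function names, the 180-degree arc, and these coordinate conventions are hypothetical and are not taken from the app in the video.

```python
import math


def can_surface_point(u, v, radius, height, arc_degrees=180.0, concave=True):
    """Map a normalized frame coordinate (u, v) in [0, 1] to a 3D point on a can.

    Hypothetical frame: the can is an ideal cylinder whose axis runs along +y
    through the origin, and the viewer sits on the +z axis looking toward -z.
    """
    if not (0.0 <= u <= 1.0 and 0.0 <= v <= 1.0):
        raise ValueError("u and v must lie in [0, 1]")
    # Spread the frame's width across an arc of the cylinder, centered on the view axis.
    theta = (u - 0.5) * math.radians(arc_degrees)
    x = radius * math.sin(theta)
    y = (v - 0.5) * height
    # Convex: the front wall, whose center bulges toward the viewer (+z).
    # Concave: the inner back wall, whose center recedes from the viewer (-z).
    z = radius * math.cos(theta) * (-1.0 if concave else 1.0)
    return (x, y, z)


def can_screen_grid(cols, rows, radius, height, arc_degrees=180.0, concave=True):
    """Return ((u, v), (x, y, z)) pairs for a (cols + 1) x (rows + 1) vertex grid."""
    grid = []
    for j in range(rows + 1):
        for i in range(cols + 1):
            u, v = i / cols, j / rows
            xyz = can_surface_point(u, v, radius, height, arc_degrees, concave)
            grid.append(((u, v), xyz))
    return grid
```

A renderer could then draw each game frame as a texture on this grid, using every vertex's (u, v) as its texture coordinate, and attach the grid to the tracked can so the screen stays locked to it.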

By turning the can into an integral part of the AR experience, it creates a novel game style that takes advantage of the can’s physical attributes. This is a clever example of elevating a mundane object into an AR gaming platform. More ideas like this can continue expanding the creativity of AR applications.

Tool

Spline: A Lightweight 3D Online Collaborative Design Tool

Keywords: lightweight, asset library, localization

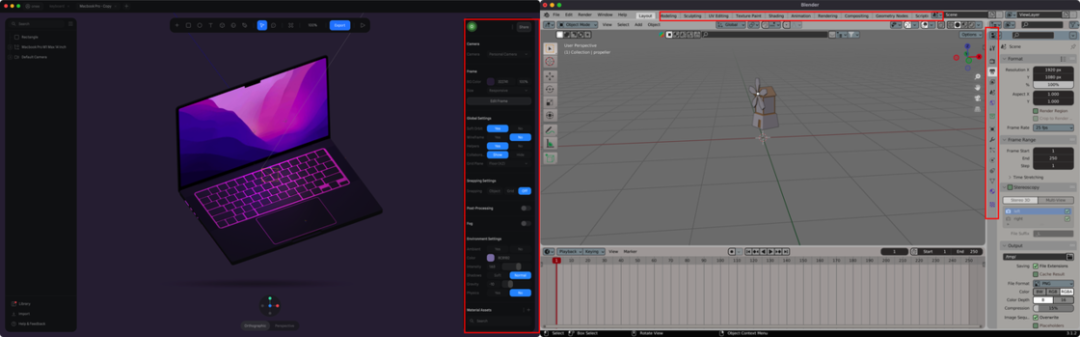

Spline is a lightweight online collaborative 3D design tool that combines modeling, materials, interactions, animation, and code export. If "lightweight" sounds abstract, think of it this way: Spline is to 3D what Figma is to 2D design, while Blender is closer to Photoshop.

To make it less abstract, let’s compare the interface complexity between Spline and Blender:

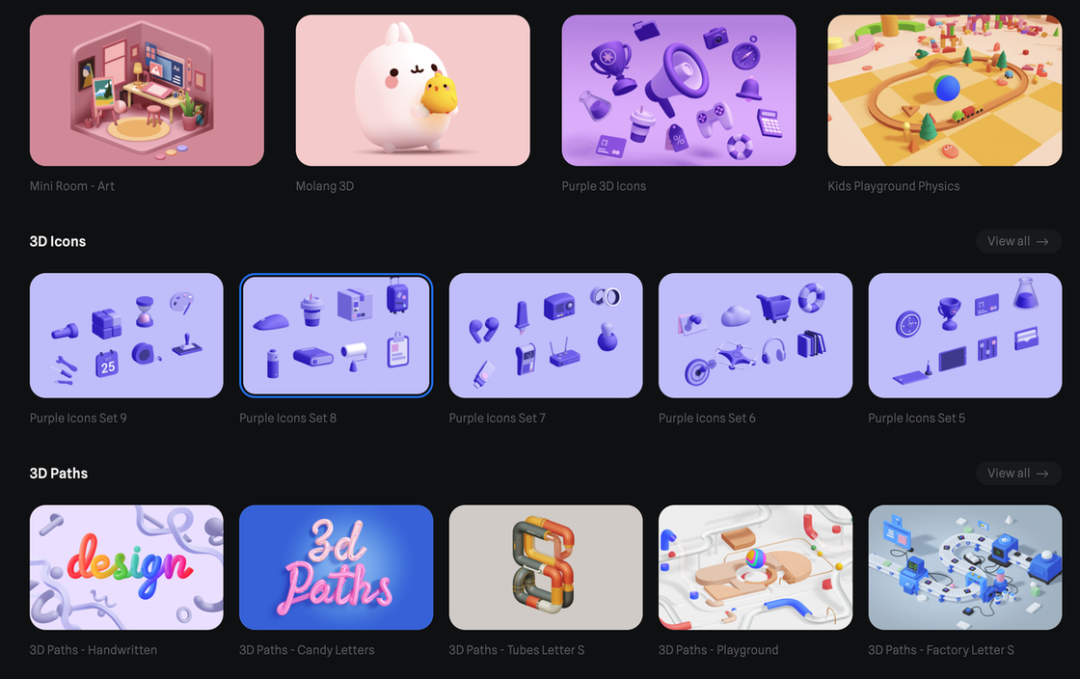

A more complex interface does reflect more functionality, but for creators who just want to make simple 3D effects, Blender is overkill. Spline also provides a very rich asset library, which greatly lowers the barrier to building polished 3D scenes.

Spline was initially positioned as a lightweight 3D design tool for adding 3D elements to web pages or product promotional images. As a result, early versions could only export 3D models as images or public URLs, which was inconvenient for AR developers who wanted to bring its output into their own workflows.

Recent versions of Spline finally support exporting to 3D formats such as glTF and USDZ, the former commonly used on Android and the latter on iOS. For creators focused on lightweight AR scenes, this means teams can start trialing Spline as a 3D modeling tool within their pipelines.
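Since glTF export is what makes this pipeline integration possible, one cheap safeguard is to sanity-check an exported binary glTF (.glb) before it enters the pipeline. The sketch below follows the container layout in the Khronos glTF 2.0 specification (a 12-byte header holding the "glTF" magic, the version, and the total length, followed by a JSON chunk). The helper names are hypothetical, it is not Spline's export code, and it does not cover USDZ, which is a separate zip-based format.

```python
import json
import struct

GLB_MAGIC = 0x46546C67   # bytes b"glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # bytes b"JSON" read as a little-endian uint32


def read_glb_json(data: bytes) -> dict:
    """Validate a binary glTF 2.0 (.glb) header and return its parsed JSON chunk."""
    if len(data) < 20:
        raise ValueError("too short to be a GLB file")
    # 12-byte header: magic, container version, total file length.
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC:
        raise ValueError("not a GLB file (bad magic)")
    if version != 2:
        raise ValueError(f"unsupported GLB version {version}")
    if length != len(data):
        raise ValueError("header length does not match the data size")
    # The first chunk must be the JSON scene description.
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != CHUNK_JSON:
        raise ValueError("first chunk is not JSON")
    payload = data[20:20 + chunk_length]
    # Trailing space padding is valid JSON whitespace, so json.loads accepts it.
    return json.loads(payload.decode("utf-8"))


def make_glb(document: dict) -> bytes:
    """Build a minimal JSON-only GLB (no binary chunk), e.g. for testing."""
    payload = json.dumps(document).encode("utf-8")
    payload += b" " * (-len(payload) % 4)  # chunks are padded to 4-byte alignment
    total = 12 + 8 + len(payload)
    return (struct.pack("<III", GLB_MAGIC, 2, total)
            + struct.pack("<II", len(payload), CHUNK_JSON)
            + payload)
```

In practice you would call `read_glb_json(open(path, "rb").read())` on an exported file and, for example, inspect the returned `"asset"` and `"meshes"` entries before handing the model to the rest of the pipeline.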

Another reason to recommend Spline is its strong localization effort. You can find its content on WeChat Video Accounts, Xiaohongshu, Weibo, and more, with very frequent updates. It even provides an official Chinese user manual specifically for users in China.

Course

GenJi Really Wants to Teach You: Bilibili's Simplest Spline Tutorial

Keywords: Spline, tutorial, systematic

Now that we have introduced Spline, here are some tutorials. The official Chinese user manual explains individual features, but it feels somewhat fragmented. If you prefer a more systematic approach, try this 9-part Spline tutorial series by Teacher GenJi. Each video runs a little over 10 minutes, easy to watch in spare moments (after liking and subscribing, of course).

Apple Augmented Reality by Tutorials / ARKit by Tutorials

Keywords: Apple, AR, Kodeco

Kodeco (formerly raywenderlich.com) has always been one of XReality.Zone's favorite tech teams. In our opinion, their "Apple Augmented Reality by Tutorials" is one of the best introductory books for learning Apple's AR technologies.

The book may feel like it only skims the surface, but that is largely because AR is such a vast field. Going too deep in an introductory book could discourage readers, so the book is deliberately restrained in how far it expands on each concept.

If the $59.99 price tag is daunting, you can check out the free "ARKit by Tutorials" book instead. Although its content is a bit dated, it still helps build an understanding of Apple's AR stack (you can't expect too much from free content, after all).

Code

iOS App: AR Basic App

Keywords: iOS, AR, template project

Yasuhito Nagatomo is a Japanese developer we really like. He has independently published multiple AR apps on the App Store and is very active on Twitter, often sharing interesting perspectives. He recently open-sourced a new project on GitHub: AR Basic App.

Although the app's models are very simple and it has no complex features, it demonstrates many common concerns when building AR apps on Apple platforms, such as embedding an ARView in SwiftUI and managing AR session state, camera state, and AR onboarding state.

I think developers of all levels can gain some useful tips from this project!

Video

5 Mistakes Beginner VR Developers Often Make

Keywords: beginner, VR developer

This is a video series about common mistakes beginner VR developers make. The creator summarizes various issues he’s seen from new developers on Discord, forums and other communities.

In short, he believes beginner VR devs often make these mistakes:

- Jumping into VR without learning Unity basics first

- Neglecting programming fundamentals

- Watching too many tutorials without practicing themselves

- Over-optimizing too early in development

- Not publishing projects to get feedback

Roadmap for New AR/VR Developers in 2023

Keywords: learning path

If you follow XR development, you have probably come across Dilmer Valecillos. As a Microsoft MVP who teaches XR, he offers learning advice that is valuable for developers of all levels. In his latest video, he discusses how beginners should approach learning AR/VR in 2023.

At first glance, the video looks like a promotion for his new course. But the course outline also doubles as a solid learning path for beginners.

The recommended dev tools in the second half are also very practical, covering pretty much all mainstream options today. No more confusion in choosing an SDK during development!

Article

Oculus VR Design Guidelines (Chinese)

Keywords: design guidelines, localization

https://nkul2ot3c0.feishu.cn/docs/doccnJCQWhdqpKhTxeQuhTEGDzb

Design guidelines are crucial for any platform. Just as Apple's Human Interface Guidelines (HIG) underpin countless classic apps, Oculus, the largest VR platform, provides a unified framework to keep VR apps and content consistent and reliable. That makes the Oculus design guidelines a must-read for every VR creator.

Luckily, Chinese product designer Frad and others have translated the guidelines into Chinese, and actively maintain the doc. If you haven’t systematically read such materials before, or struggle with long English passages, their localized version will be your best choice!

XR 基地
